When was the last time you clicked a button on a website or completed an online purchase almost effortlessly? That seamless experience wasn’t accidental — it was the result of deliberate design choices guided by A/B testing for conversion rates. Behind every intuitive click, clear call-to-action, and persuasive layout lies data-driven experimentation that helps marketers and product designers understand exactly what works best for users.

If you’ve ever wondered why your website traffic isn’t translating into leads or sales, or you’re unsure how to boost your conversion rate, A/B testing could be the key to unlocking your site’s true potential. In this blog post, we’ll break down what A/B testing for conversion rates really means, explore how it enhances conversion optimization, and share proven strategies to implement it effectively as part of your broader marketing and UX strategy.

What Is A/B Testing?

The purpose of A/B testing for conversion rates (also known as split testing) is to compare two or more versions of a webpage, email, or ad and see which one performs better. In essence, you show one group of users version “A” and the other version “B”, then observe which performs better against your chosen metric.

Metrics to test can include:

- Click-through rate (CTR): How often users click on a button, link, or ad.

- Conversion rate: How many users complete a desired action, such as signing up, purchasing, or downloading.

- Bounce rate: How many users leave a page without taking any action.

By implementing the winning variation, you can make data-backed decisions and drive better results without resorting to guesswork.
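To make these definitions concrete, here is a minimal Python sketch that turns raw counts into the three metrics. The counts and variable names are made up for illustration; your analytics tool will use its own field names and may define a bounce slightly differently.

```python
def rate(events: int, total: int) -> float:
    """Return events / total as a fraction, or 0.0 when total is zero."""
    return events / total if total else 0.0


# Hypothetical counts for one page variant (illustrative numbers only).
impressions = 2000        # times the CTA was shown
clicks = 150              # clicks on the CTA
sessions = 1000           # visits to the page
conversions = 40          # sessions that ended in the desired action
bounced_sessions = 450    # sessions that left without taking any action

ctr = rate(clicks, impressions)
conversion_rate = rate(conversions, sessions)
bounce_rate = rate(bounced_sessions, sessions)

print(f"CTR: {ctr:.1%}")
print(f"Conversion rate: {conversion_rate:.1%}")
print(f"Bounce rate: {bounce_rate:.1%}")
```

Whichever metric you pick, compute it the same way for every variant so the comparison stays fair.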

Why A/B Testing Is Crucial for Conversion Rate Optimization

Want more sign-ups? Higher sales? Fewer abandoned carts? A/B testing for conversion rates helps pinpoint what works and what doesn’t, transforming the user experience into one that guides your audience smoothly toward conversion.

Here are some key reasons why A/B testing for conversion rates is a game-changer for conversion rate optimization (CRO):

- Data-Driven Decisions: Stop guessing and start acting on evidence of what your audience responds to.

- Customization: Tailor your website or campaigns to your audience’s preferences.

- Cost Efficiency: Small tweaks made through A/B testing for conversion rates can lead to big returns over time.

- Reduced Risk: By testing incremental changes, you minimize the risk of turning users away with drastic updates that fail.

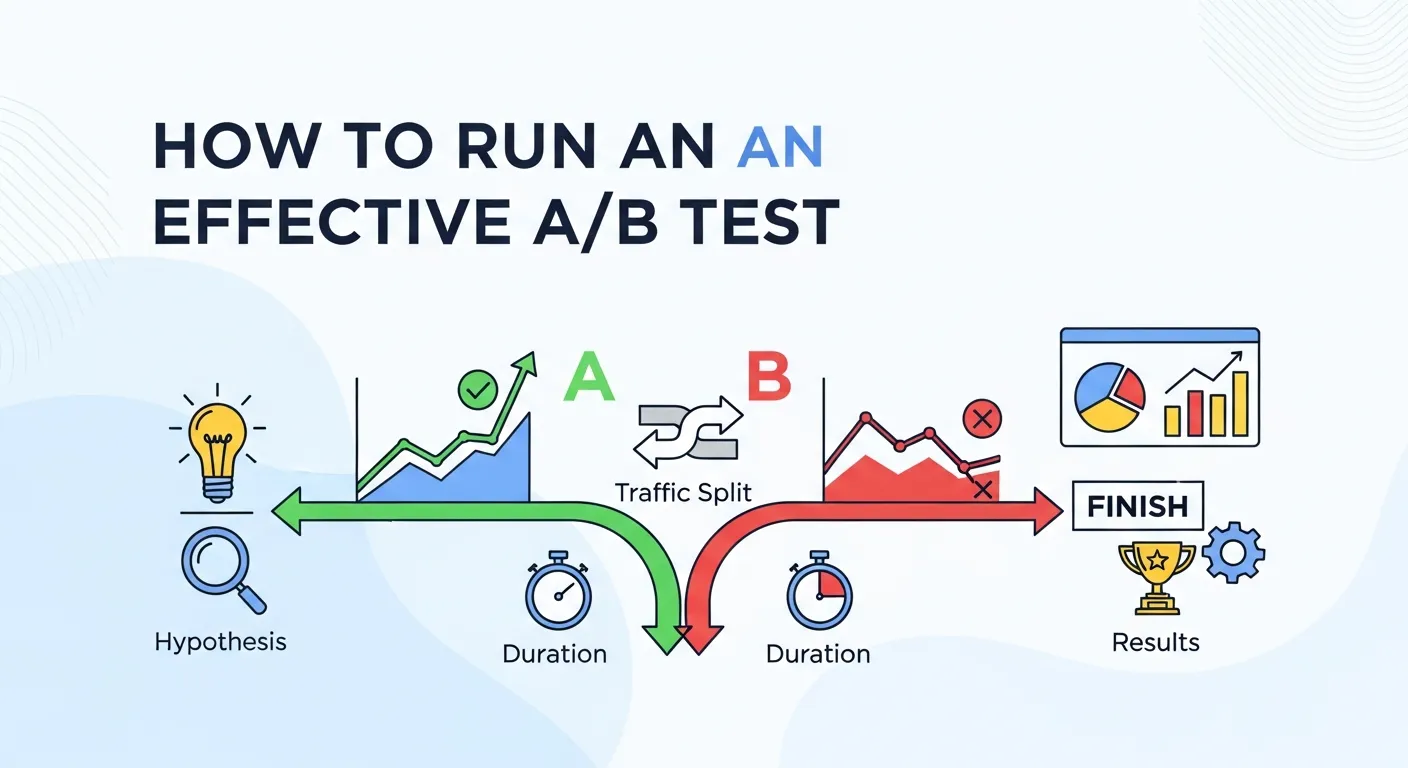

How to Run an Effective A/B Test

Running a successful A/B test requires more than swapping a button color or changing a headline. It’s a structured, data-driven process designed to uncover what truly resonates with your audience. Follow these steps to ensure your A/B testing for conversion rates delivers actionable insights:

1. Define Your Goals

Start by clarifying the specific objective of your test. Are you aiming to:

- Increase email signups?

- Drive more purchases?

- Reduce cart abandonment?

Well-defined goals guide what you test, how you measure success, and help you avoid chasing vanity metrics. The clearer your objective, the more impactful your results will be.

2. Identify What to Test

Almost any element of your website or app can be tested, but prioritizing key areas often yields the best results:

- Headlines and Copy: Will a bold, attention-grabbing claim outperform a subtle, informative one?

- Call-to-Action (CTA): Experiment with wording, color, size, and placement. Does “Get Started” convert better than “Sign Up Now”?

- Page Layouts: Test rearrangements, whitespace, or minimalist layouts to enhance readability and engagement.

- Visuals: Images, videos, and fonts influence perception and emotion—small changes can have big impacts.

- Checkout Process: Simplifying steps, reducing form fields, or highlighting trust signals can significantly improve conversions.

3. Create Hypotheses

A/B testing for conversion rates isn’t random; it’s about validating assumptions. For each change, write a hypothesis such as:

- “If we use a larger CTA button, the click-through rate will increase.”

- “Replacing stock images with authentic team photos will improve conversions.”

Hypotheses keep your tests focused and help interpret results meaningfully.

4. Split Your Audience

Divide your traffic into random, equal groups to ensure accurate comparisons:

- Group A: Sees the original version.

- Group B: Sees the variation.

A sufficient sample size is crucial, because small audiences can produce unreliable results. Tools like Optimizely or VWO handle randomization and segmentation for you. (Google Optimize, long a popular free option, was discontinued by Google in September 2023.)
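If you ever need to split traffic yourself rather than rely on a testing platform, one common approach is to hash a stable user ID so each visitor always lands in the same group. The sketch below is only an illustration, not how any particular tool works; `assign_variant`, the experiment name, and the user IDs are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to a variant with roughly equal odds."""
    # Including the experiment name means the same user can land in
    # different groups across different experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Quick check that the split is close to even (hypothetical user IDs).
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "cta-color-test")] += 1
print(counts)
```

Because the assignment depends only on the ID and the experiment name, a returning visitor keeps seeing the same version, which keeps the comparison clean.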

5. Run the Test for Enough Time

Avoid the common mistake of ending a test too soon. Consider:

- Traffic volume: More visitors mean faster, more reliable results.

- Statistical significance: Check that the difference between versions is unlikely to be due to chance (a common threshold is a p-value below 0.05). Most testing tools calculate this automatically.

Running the test long enough ensures confidence in your findings and avoids costly misinterpretations.
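For a rough sense of how much traffic counts as "enough", the standard sample-size approximation for comparing two conversion rates can be sketched as follows. The baseline rate, target rate, 5% significance level, and 80% power are assumptions chosen for illustration; a dedicated calculator or your testing tool may use a slightly different formula.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(baseline: float, expected: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variant for a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    effect = expected - baseline
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Assumed scenario: 4% baseline conversion rate, hoping to detect a lift to 5%.
n = sample_size_per_group(0.04, 0.05)
daily_visitors = 1000  # hypothetical total traffic across both variants
print(f"Visitors needed per variant: {n}")
print(f"Approximate days to run: {ceil(2 * n / daily_visitors)}")
```

Notice that the smaller the lift you want to detect, the more visitors you need, which is why low-traffic pages often call for bolder changes or longer tests.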

6. Analyze the Results

Focus on the metrics tied to your original goal. Did version B outperform version A? Or did performance remain similar?

- Successes validate your assumptions.

- Failures are equally valuable—they reveal what doesn’t work and inform your next iteration.
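One common way to check whether version B really beat version A is a two-proportion z-test. Below is a minimal sketch using only Python’s standard library; the visitor and conversion counts are hypothetical, and your testing tool may use a different statistical method (for example, a Bayesian one).

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in the data; the test is undefined.")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: A converts 200 of 5,000 visitors, B converts 250 of 5,000.
z, p = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p-value = {p:.4f}")
print("Significant at the 5% level" if p < 0.05 else "Not significant at the 5% level")
```

A small p-value suggests the gap is unlikely to be noise, but it says nothing about whether the lift is large enough to matter for your business, so look at the size of the difference as well.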

7. Implement the Winning Variation

Once a winner emerges, roll it out across your platform. But remember, A/B testing for conversion rates is an ongoing process, not a one-time fix. Use each test as a learning opportunity to continuously optimize your website, refine user experiences, and boost conversion rates over time.

A/B Testing Across Different Marketing Channels

A/B testing isn’t just for websites — it’s a versatile strategy that can optimize virtually every marketing touchpoint. Whether your audience interacts with your brand through email, search engines, social media, or content platforms, consistent testing ensures every interaction drives maximum engagement and conversions.

Key channels to consider:

- Email Marketing: Email campaigns are ideal for A/B testing because even small changes can significantly impact open rates and click-through rates. Experiment with subject lines, preheaders, sender names, and CTA buttons. For example, testing a personalized subject line versus a generic one can reveal which approach drives higher engagement. Learn more about email-specific experiments.

- Search Engine Marketing (SEM): Paid search campaigns benefit from A/B testing on ad copy, headlines, display URLs, and landing pages. Even subtle variations, like changing the phrasing of a CTA or using different value propositions, can lower cost-per-click and increase conversions. Smart SEM experiments help marketers identify which elements resonate with users and generate the highest ROI.

- Social Media Ads: Platforms like Facebook, Instagram, and LinkedIn allow you to test creative visuals, ad copy, formats, and audience targeting. For instance, testing an image-focused ad against a video ad can reveal which format captures more attention and drives clicks. Segmenting your audience ensures you reach the right users with the most effective messaging.

- Content Marketing: Headlines, featured images, blog layout, and embedded CTAs can all be optimized through A/B testing. A compelling headline might increase click-through to a landing page, while strategic CTA placement can boost lead generation.

Benefits of multi-channel testing:

- Holistic view of your audience behavior across platforms.

- Consistent messaging that guides users toward conversion.

- Improved ROI by focusing efforts on channels that deliver measurable results.

By applying A/B testing for conversion rates across these channels, you create a cohesive strategy that not only improves performance but also provides actionable insights that inform your broader marketing decisions.

Advanced A/B Testing Techniques for Conversion Rates

Once you’ve mastered the basics, advanced A/B testing techniques allow you to uncover deeper insights, optimize multiple variables simultaneously, and make more impactful decisions. These methods are especially useful for companies looking to refine user experiences at a granular level.

Popular advanced methods include:

- Multivariate Testing: Test multiple elements on a single page or email to understand which combination works best. For example, testing different headlines, images, and CTA buttons together can reveal the perfect formula for conversions.

- Split URL Testing: Compare completely different page layouts hosted on separate URLs. This is ideal when testing major redesigns or entirely new landing pages, as it allows you to evaluate how structural differences affect user behavior.

- Behavioral Segmentation: Break down your audience into segments, such as mobile vs. desktop users, new vs. returning visitors, or geographic regions. Segment-specific testing ensures your website or campaign resonates with each unique audience.

- Sequential Testing: Run variations over time to account for seasonal trends, time-based behavior, or campaign cycles. This method is particularly useful for measuring the long-term effectiveness of changes.

| Technique | Purpose | Best Use Case |
|---|---|---|
| Multivariate Testing | Evaluate combinations of multiple elements | Homepage redesigns or product pages |
| Split URL Testing | Compare completely different layouts | Landing page experiments |
| Behavioral Segmentation | Understand audience-specific behavior | Mobile vs. desktop, new vs. returning users |
| Sequential Testing | Detect trends over time | Seasonal campaigns or promotions |
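One practical caveat with multivariate testing is how quickly the number of variants grows. The short sketch below enumerates every combination for a full-factorial test; the headlines, images, CTA labels, and the per-variant traffic figure are all hypothetical placeholders.

```python
from itertools import product

# Hypothetical options for three page elements.
headlines = ["Save time today", "Work smarter"]
images = ["team-photo", "product-shot"]
ctas = ["Get Started", "Sign Up Now", "Try It Free"]

# In a full-factorial test, every combination becomes its own variant.
variants = list(product(headlines, images, ctas))
print(len(variants))  # 2 * 2 * 3 = 12 variants

# Assumed traffic needed per variant (a placeholder, not a recommendation).
visitors_per_variant = 5000
print(len(variants) * visitors_per_variant)  # 60000 visitors in total
```

Because each combination needs its own share of traffic, multivariate tests tend to suit high-traffic pages; on quieter pages, a series of simple A/B tests is often more realistic.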

Why use advanced techniques?

- Identify subtle performance improvements that single-variable testing may miss.

- Understand audience preferences more deeply, enabling targeted personalization.

- Make data-driven decisions for complex campaigns without relying on guesswork.

For marketers seeking a complete understanding of A/B testing for conversion rates in digital marketing, including how to structure experiments and interpret results, this guide offers comprehensive insights: A/B Testing in Digital Marketing.

By combining these advanced strategies with iterative testing, businesses can continuously optimize experiences, increase conversion rates, and make smarter, evidence-based marketing decisions.

Measuring Success and Iterating

Running A/B testing for conversion rates is only valuable if you know how to measure success, interpret results correctly, and iterate on your findings. Without a clear framework for measurement, even the best tests can fail to deliver actionable insights. By systematically analyzing performance, you can turn each experiment into a stepping stone toward higher conversion rates and better user experiences.

Key Metrics to Track

To fully understand the impact of your tests, monitor both direct and indirect metrics:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Conversion Rate | Percentage of users completing a desired action (signup, purchase, download) | Direct indicator of test success |
| Click-Through Rate (CTR) | Frequency of clicks on buttons, links, or CTAs | Shows how compelling your messaging is |
| Engagement Metrics | Time on page, scroll depth, bounce rate | Helps you understand user behavior and content effectiveness |
| Revenue Metrics | Average order value, revenue per visitor | Measures the financial impact of your optimizations |
| Customer Retention | Repeat visits or purchases | Indicates long-term impact of UX improvements |

The Key to Continuous Growth

One A/B test is never enough. Successful marketers treat testing as an ongoing cycle rather than a one-time activity. Each experiment provides insights that inform the next:

- Identify Winning Variations: Apply the version that outperforms others across key metrics.

- Analyze Secondary Insights: Sometimes, the losing variation reveals hidden opportunities, like better engagement among a niche audience segment.

- Segment Results: Break down data by device type, location, or user behavior. What works for mobile users may not work for desktop users.

- Generate New Hypotheses: Based on your findings, create new tests to refine messaging, layout, or functionality further.

- Test Incrementally: Small, iterative changes compound over time, gradually improving overall conversion rates without overwhelming users.
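Segmenting results can be as simple as grouping conversions by device and variant. The sketch below uses made-up records and a hypothetical helper, `conversion_by_segment`; in practice the data would come from your analytics export.

```python
from collections import defaultdict


def expand(variant, device, conversions, visitors):
    """Build one record per visitor from summary counts (illustration only)."""
    return [(variant, device, i < conversions) for i in range(visitors)]


def conversion_by_segment(records):
    """Return {(device, variant): conversion rate} for the given records."""
    totals = defaultdict(lambda: [0, 0])  # [conversions, visitors]
    for variant, device, converted in records:
        cell = totals[(device, variant)]
        cell[0] += int(converted)
        cell[1] += 1
    return {key: conv / n for key, (conv, n) in totals.items()}


# Hypothetical data: B helps on mobile but is roughly flat on desktop.
records = (
    expand("A", "mobile", 30, 1000) + expand("B", "mobile", 45, 1000)
    + expand("A", "desktop", 50, 1000) + expand("B", "desktop", 48, 1000)
)
rates = conversion_by_segment(records)
for (device, variant), value in sorted(rates.items()):
    print(f"{device:8s} {variant}: {value:.1%}")
```

Keep in mind that each segment has fewer visitors than the full test, so differences inside a segment are noisier and are best treated as ideas for a follow-up test rather than final answers.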

Real-World Example

Consider an e-commerce website experimenting with its checkout process. The original version had a multi-step checkout form. The variation simplified the form to a single page with fewer fields.

- Result: The variation reduced cart abandonment by 22% and increased completed purchases.

- Secondary Insight: Mobile users responded even better, suggesting future tests should focus on mobile UX improvements.

This shows how measuring results and iterating can uncover insights that go beyond the original goal, enabling smarter decisions in multiple areas of the business.

For marketers seeking to dive deeper into digital marketing experimentation and strategies, this guide offers comprehensive insights: What is A/B Testing in Digital Marketing.

Best Practices for Iterative Testing

- Document Everything: Keep track of hypotheses, results, and lessons learned for future tests.

- Focus on Actionable Metrics: Prioritize metrics that impact your business goals directly.

- Avoid Over-Optimization: Test strategically; don’t tweak every small element at once.

- Communicate Results: Share findings with your team to ensure organizational learning.

By combining careful measurement, audience segmentation, and iterative improvements, A/B testing for conversion rates becomes a powerful engine for growth. Over time, even minor, data-driven adjustments compound into major improvements in revenue, user satisfaction, and engagement.

Common Mistakes to Avoid When A/B Testing

While A/B testing for conversion rates can deliver incredible insights, avoid these pitfalls that could skew your results:

- Testing Too Many Variables at Once: If you change the button color, headline, and layout all at once, it’ll be impossible to isolate what caused the improvement (or downturn).

- Small Sample Sizes: Testing on a dozen users won’t give you the clarity needed to make robust decisions. Results become reliable only when the sample is large enough to detect the difference you care about.

- Stopping the Test Too Early: Even if version B takes an early lead, give your test enough time to account for factors like cyclical traffic patterns or different user behaviors over time.

- Ignoring Segmentation Insights: Segment your audience if needed. What works for one group (e.g., mobile users) might differ for another (e.g., desktop users).
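To see why stopping early is risky, the following simulation runs an A/A test, where both versions share the same true conversion rate, and compares checking for significance after every batch of visitors with checking only once at the end. All parameters (number of runs, peeks, batch size, and the 5% rate) are arbitrary assumptions for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """Two-proportion z-test; True when the two-sided p-value is below alpha."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return False
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z))) < alpha


def simulate(runs=300, peeks=10, batch=200, rate=0.05, seed=42):
    """Return (false-positive rate when peeking, rate with one final check)."""
    rng = random.Random(seed)
    flagged_when_peeking = flagged_at_end = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        flagged = False
        for _ in range(peeks):
            # Both groups have the SAME true rate, so any "winner" is noise.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            significant = is_significant(conv_a, n, conv_b, n)
            flagged = flagged or significant
        flagged_when_peeking += flagged
        flagged_at_end += significant  # result of the final check only
    return flagged_when_peeking / runs, flagged_at_end / runs


peek_rate, final_rate = simulate()
print(f"False positives when stopping at the first significant peek: {peek_rate:.1%}")
print(f"False positives when checking once at the end: {final_rate:.1%}")
```

Since a test that is significant at the end is also counted under peeking, the peeking rate can never be lower, and in practice it tends to be noticeably higher. Deciding on the sample size before the test starts is a simple guard against this.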

Tools to Simplify A/B Testing

Implementing A/B testing for conversion rates may sound complex, but with the right tools, it’s easier than you think. Here are some popular options:

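- Google Optimize (Discontinued): Formerly a free, straightforward tool for testing simple changes. Google retired it in September 2023, so new tests will need one of the alternatives below.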

- Optimizely (Paid): Ideal for enterprises looking for advanced features like multivariate testing.

- Crazy Egg (Paid): Visual heatmaps paired with A/B testing tools to better understand user behavior.

- VWO (Paid): A user-friendly platform for A/B, multivariate, and split URL testing.

Real-Life Example: How A/B Testing Boosted Conversion Rates

The team at Unbounce provides an example of A/B testing for conversion rates. By simplifying their landing page headline and call-to-action (CTA), they saw conversions improve by an impressive 41 percent. The results showed that a shorter, more focused landing page outperformed longer, more content-rich pages for that particular target audience.

This is just one instance of how experimentation via split testing can produce surprising and powerful results.

Start Optimizing with A/B Testing for Conversion Rates Today

A/B testing for conversion rates can work for companies of all sizes and industries. By trying different designs, copy, and layouts, you collect solid data that both raises your conversion rate and shows you what your target audience finds most engaging.

The secret to increasing conversions is to take guesswork out of the equation. Use the right tools, set clear goals, and keep testing in small steps to make big changes happen. Remember, once a test shows a winner, build on those gains and keep looking for new pockets of improvement.

Frequently Asked Questions (FAQ) About A/B Testing for Conversion Rates

1. What is A/B testing, and why is it important for conversion rate optimization?

A/B testing, also known as split testing, involves comparing two or more versions of a webpage, email, or ad to determine which performs better against a specific metric. It allows marketers to make data-driven decisions, reduce guesswork, and improve conversion rates by understanding what resonates best with their audience.

2. How do I decide which elements to test in A/B experiments?

Prioritize elements that have the highest potential impact on conversions, such as headlines, call-to-action buttons, page layouts, visuals, and checkout processes. Start small, focus on high-traffic areas, and iterate based on results to optimize efficiently.

3. How long should I run an A/B test?

Tests should run long enough to achieve statistical significance, accounting for traffic volume, seasonal fluctuations, and user behavior. Ending a test too early may produce misleading results. A/B testing tools like Optimizely or VWO can help you judge when a test has collected enough data.

4. How can I analyze A/B test results effectively?

Focus on metrics aligned with your goals, such as conversion rate, click-through rate, bounce rate, and revenue per visitor. Compare variations using statistical significance to ensure differences are meaningful. Analyze secondary insights, as losing variations may reveal unexpected opportunities.

5. Can A/B testing improve other areas of marketing besides websites?

Absolutely! A/B testing can enhance email campaigns, SEM ads, social media campaigns, and content marketing. For instance, experimenting with subject lines or ad copy can significantly boost engagement. For a career-focused perspective, you can explore building a career in CRO strategies to understand broader applications.

6. What are common mistakes to avoid in A/B testing?

- Testing too many variables simultaneously.

- Using sample sizes too small to reach statistical significance.

- Ending tests prematurely.

- Ignoring audience segmentation.

Avoiding these mistakes ensures reliable, actionable insights and more accurate conversion optimization.

7. How do I prioritize which A/B tests to run first?

Focus on high-impact areas that affect core business goals, like checkout funnels, lead generation forms, or landing page CTAs. Evaluate potential traffic, expected lift, and business value before planning experiments to maximize ROI efficiently.

8. Can A/B testing boost ROI for my business?

Yes! Even small improvements in conversion rates can have a large impact on revenue. Iterative testing allows companies to optimize campaigns continuously and identify the most effective strategies. Learn more about actionable strategies in CRO strategies to take your business to the next level.

9. How advanced should my A/B testing strategy be?

Advanced techniques, such as multivariate testing, split URL testing, and behavioral segmentation, are ideal once basic tests provide consistent results. These strategies allow for fine-tuned optimization and deeper insights into audience behavior. For more complex approaches, check advanced CRO strategies for business growth.

10. How often should I run A/B tests?

Testing should be an ongoing process, not a one-time task. Continuous experimentation allows you to adapt to evolving audience behavior, seasonal trends, and new business goals. Iterative testing ensures your website or campaigns remain optimized over time.